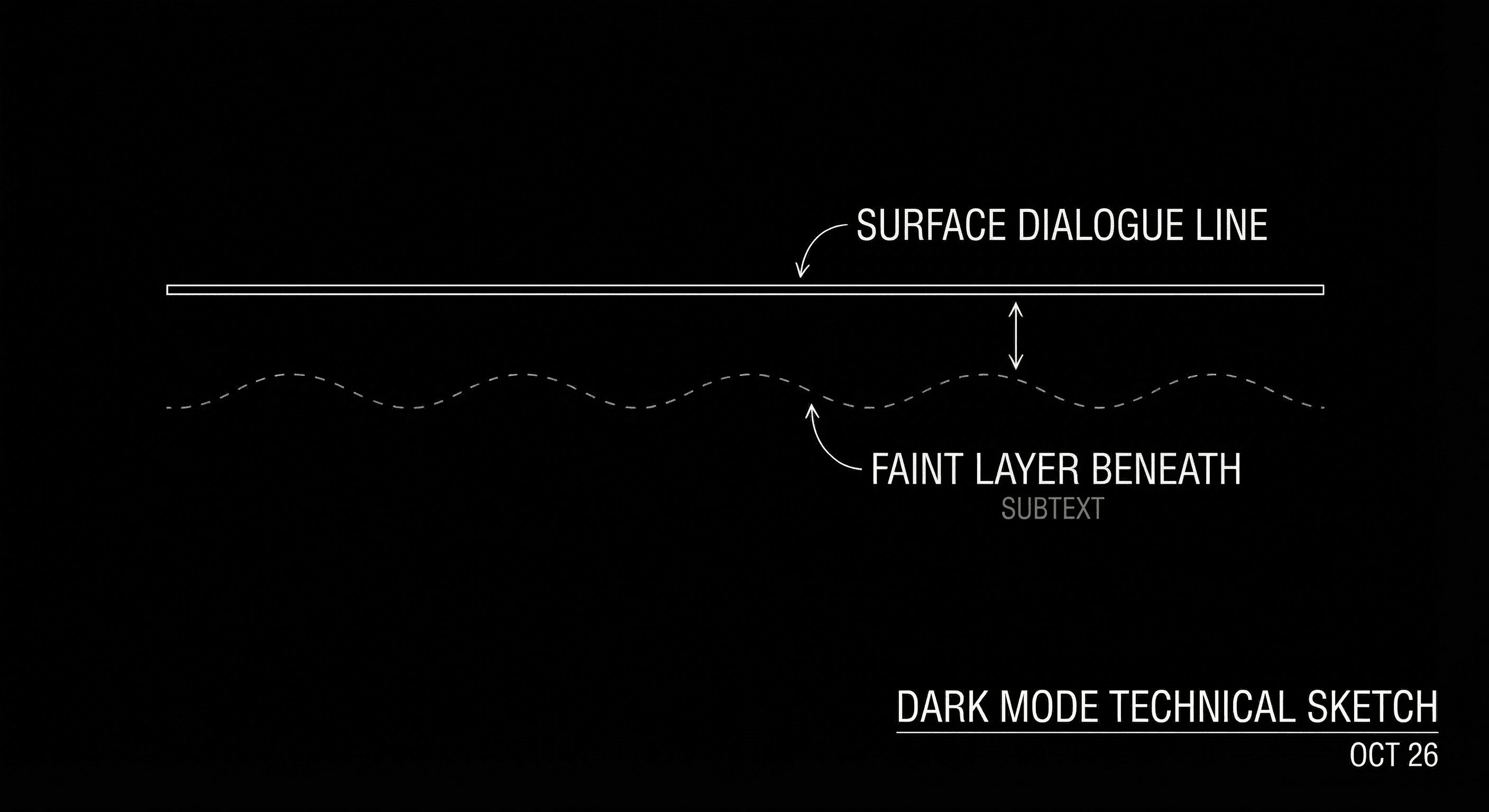

She says "I’m fine." The audience knows she isn’t. That gap between what’s said and what’s meant is subtext, and it’s one of the hardest things to write. So it’s one of the first things writers ask about when they sit down with an LLM: can it write subtext? The short answer: not in any reliable way. The long answer is worth understanding, because it tells you exactly where to use these tools and where to keep them out of the loop.

Subtext isn’t a line. It’s the space between the line and the situation. Models can mimic the shape of subtext (characters saying one thing while meaning another), but they can’t reliably invent the meaning that makes it land. That has to come from you.

For how to write subtext yourself, see dialogue subtext vs exposition. For the ethics of using AI on script dialogue, see ethics of AI in screenwriting, which draws the line.

What Subtext Actually Is

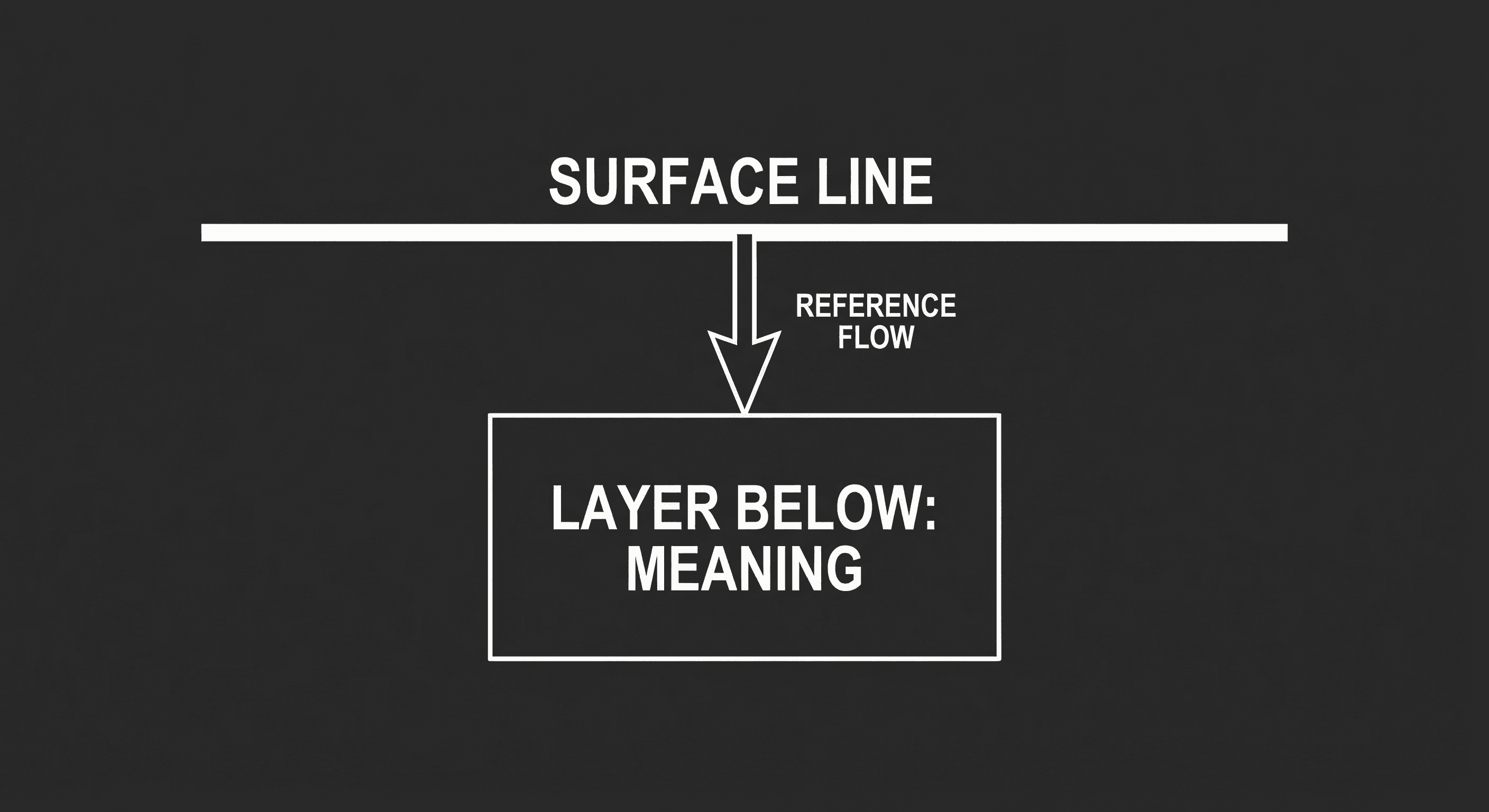

Subtext is the meaning under the text. The character says something; the audience (or the other character) understands something else, or senses that there’s more going on. It works because of context: who these people are, what they want, what they’re afraid of, what just happened. "I’m fine" lands as subtext when we know the character is not fine and is deflecting. Without that setup, "I’m fine" is just a line. So subtext is relational and situational. It depends on the story you’ve built. A model can generate dialogue that looks like subtext (polite surface, tension underneath), but it doesn’t have access to your story’s specific history, your character’s private wound, or the thematic point you’re making in the scene. It’s guessing from patterns. Sometimes the guess is good. Often it’s generic or off. That’s the limit.

Think about it this way. In a scene where two exes run into each other, "You look good" can mean "I still want you," "I’m over you and I’m being polite," or "I’m hurting and I’m pretending not to." The line is the same. The subtext depends on the character, the relationship, and the moment. A model can offer you several versions of "You look good" with different stage directions or follow-up lines. It can’t know which one fits your story unless you’ve given it so much context that you’ve almost written the scene. And if you’ve written the context, you’re the one who knows the subtext. So the model ends up as a thesaurus for possible readings, not the author of the reading you want.

A Simple Test: Same Setup, Different Models

Here’s a test you can run. Take a scenario: two siblings, one of whom just got the promotion the other wanted. They’re at a family dinner. Write one line of dialogue that carries subtext: what’s said versus what’s meant. Then ask an LLM: "Write a short exchange between these two where there’s tension under the surface. They’re at a family dinner. One got the promotion the other wanted." You’ll get something. It might have a workable beat or two. But compare it to what you’d write when you know your version of these siblings: their history, their tells, the specific way this family avoids conflict. The model’s version will tend toward the average of "sibling rivalry at dinner": generic jealousy, generic deflection. Your version can be specific: the way one of them always talks about "luck," or the way the other goes quiet in a particular way. The model doesn’t have that. So it can’t write your subtext. It can only approximate the category "subtextual sibling scene."

That’s the limit. It isn’t that the model never produces a usable line (sometimes it does); it’s that it can’t be trusted to carry the emotional and thematic weight you need. For scenes where subtext is doing heavy lifting, you have to write it yourself. For more on building that kind of scene, writing dialogue subtext and film noir subtext go deeper.

Where LLMs Help and Where They Don’t

| Task | LLM useful? | Why |
|---|---|---|
| Generate a line that "sounds" tense or polite | Sometimes | It can mimic tone. It can’t reliably tie that tone to your story’s specifics. |
| Suggest alternate phrasings for a line you wrote | Yes | You already decided the subtext. The model is just varying the surface. |
| Write a full scene where subtext carries the scene | No | The model doesn’t have your character history, theme, or intent. Output will be generic. |
| Break a block ("I need something that feels like deflection") | Maybe | You might get a starting point. You’ll still need to rewrite so it fits your character. |
| Polish dialogue you’ve already written for subtext | Yes, carefully | Use as options; don’t accept without checking that the subtext you want is still there. |

So: use models for variation and options on lines you’ve already conceived. Don’t use them to invent the subtext of a scene. The emotional logic has to come from you. For a workflow that keeps you in charge of structure and script, augmented screenwriting avoids the trap of letting the tool author the scene.

Relatable Scenario: The "Polite" Breakup Scene

You need a scene where the couple is breaking up but both are being painfully polite. You know why: she’s already checked out; he’s trying to save face. You prompt the model: "Write a short breakup scene where they’re both very polite but the audience can tell they’re hurting." You get a scene. The dialogue might be serviceable ("I think we both know," "We had a good run"). But the specific beats you need (the line that echoes something from act one, the moment he almost cracks but doesn’t) probably aren’t there. The model doesn’t know your act one. It doesn’t know your theme. So you take the premise (polite surface, pain underneath) and write the scene yourself, or you use the model’s draft as a rhythm reference and replace most of the lines with your own. The subtext that lands is the one you put in. The model gave you a template; you filled it with meaning.

Relatable Scenario: The Line That Won’t Land

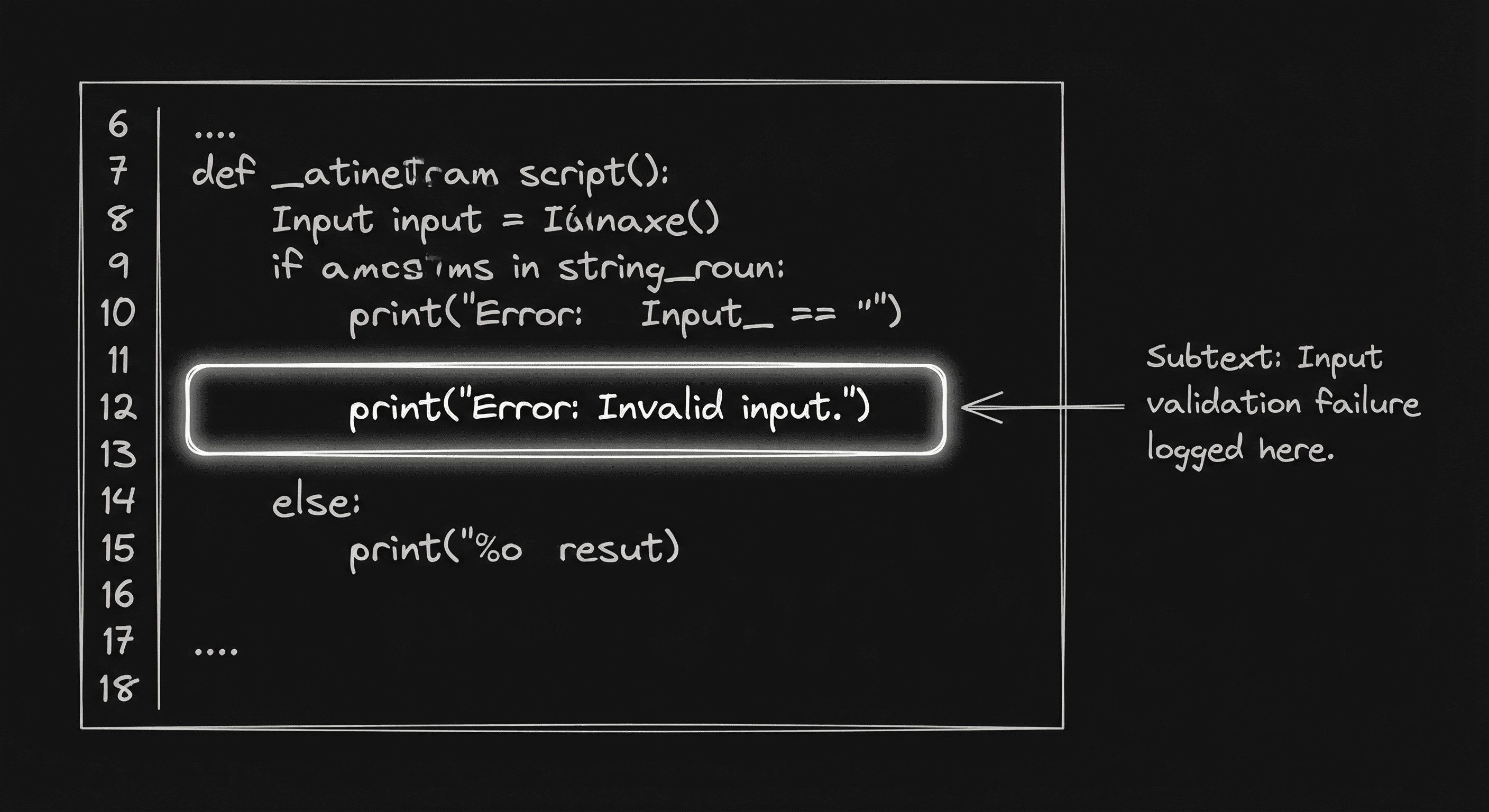

You’ve written the scene. One line is almost right but feels on-the-nose. You paste it into an LLM: "Give me three alternatives that keep the same subtext (she’s deflecting because she’s guilty) but vary the words." Now the model is useful. You’ve already defined the subtext; you’re only asking for surface variation. You might get an option that’s better than your first draft. You still need to check that the new line doesn’t change the subtext or step on the next beat. So when you’ve already decided what’s under the line, the model can help with the line. When you haven’t, it can’t do that work for you.

What Beginners Get Wrong

Asking the model to "write a scene with subtext." You’ll get a scene that resembles subtext: polite dialogue, maybe a stage direction about tension. You won’t get a scene where the subtext is tied to your story. The fix: write the scene yourself, or write a detailed prompt that spells out the character dynamics and what you want under the surface. Even then, treat the output as a draft to rewrite.

Assuming "sounds good" means "right." A line can sound plausible and still be wrong for your story. The model might give you a deflecting line that doesn’t match how your character deflects. So always check: does this line carry my subtext, or just a subtext? If it’s generic, replace it.

Using model output for high-stakes emotional beats. The most important scenes (confessions, reversals, goodbyes) depend on subtext that’s been built over the whole script. The model hasn’t read your script, and it can’t pay off what it didn’t set up. So write the dialogue for those scenes yourself. Use the model for low-stakes variation or for breaking a block, not for the climax.

Expecting the model to "get" theme. Subtext often serves theme: the character says one thing while the story says another. Models don’t have a representation of your theme; they have patterns. So thematic subtext has to be written by you. The model can’t reliably align dialogue with the argument your story is making.

Giving up on subtext because "AI can’t do it." The takeaway isn’t "don’t use AI." It’s "use AI for what it’s good at (structure, variation, research) and keep subtext in your hands." Your voice is in the subtext. Protect it. For more on voice and dialogue, character voice consistency and dialogue subtext are the next reads.

The Perspective

LLMs can mimic the shape of subtext. They can’t reliably invent the meaning that makes subtext land; that meaning comes from your story, your characters, and your intent. Use models to vary lines once you’ve decided what’s under the surface. Don’t use them to author the emotional logic of a scene. The limit isn’t the technology. It’s that subtext is yours, and that’s the part worth keeping.

[YOUTUBE VIDEO: Side-by-side test, same scenario written by a human vs generated by an LLM; then a rewrite pass showing how to fix the generic "subtext" the model produced.]

For dialogue craft that doesn’t rely on AI, writing dialogue subtext and subtext in film noir go deep. For the tasks where AI is useful, using AI for outlining and prompt engineering stay on the right side of the line. The USCO (U.S. Copyright Office) policy on AI-generated material is the external reference for how copyright treats human versus machine authorship.


Subtext is the gap between the line and the meaning. Models can fill the line. They can’t reliably fill the meaning, because that’s tied to your story. So use AI for structure, variation, and research, and keep subtext in your hands. That’s where your voice lives, and where the limits of LLMs are clearest.

Final Step

Build your next script with Screenweaver

Move from ideas to production-ready pages faster with timeline-native writing and AI-assisted story flow.

Try Screenweaver