The tool that writes your email subject line is not the tool that helps you break your second act.

This seems obvious. But the language around both ("AI writing," "generative text," "intelligent composition") collapses a distinction that matters enormously. People talk about AI writing as if there's one thing, one capability, one future. There isn't. There are at least two very different applications, with different purposes, different workflows, and different implications for human creative agency.

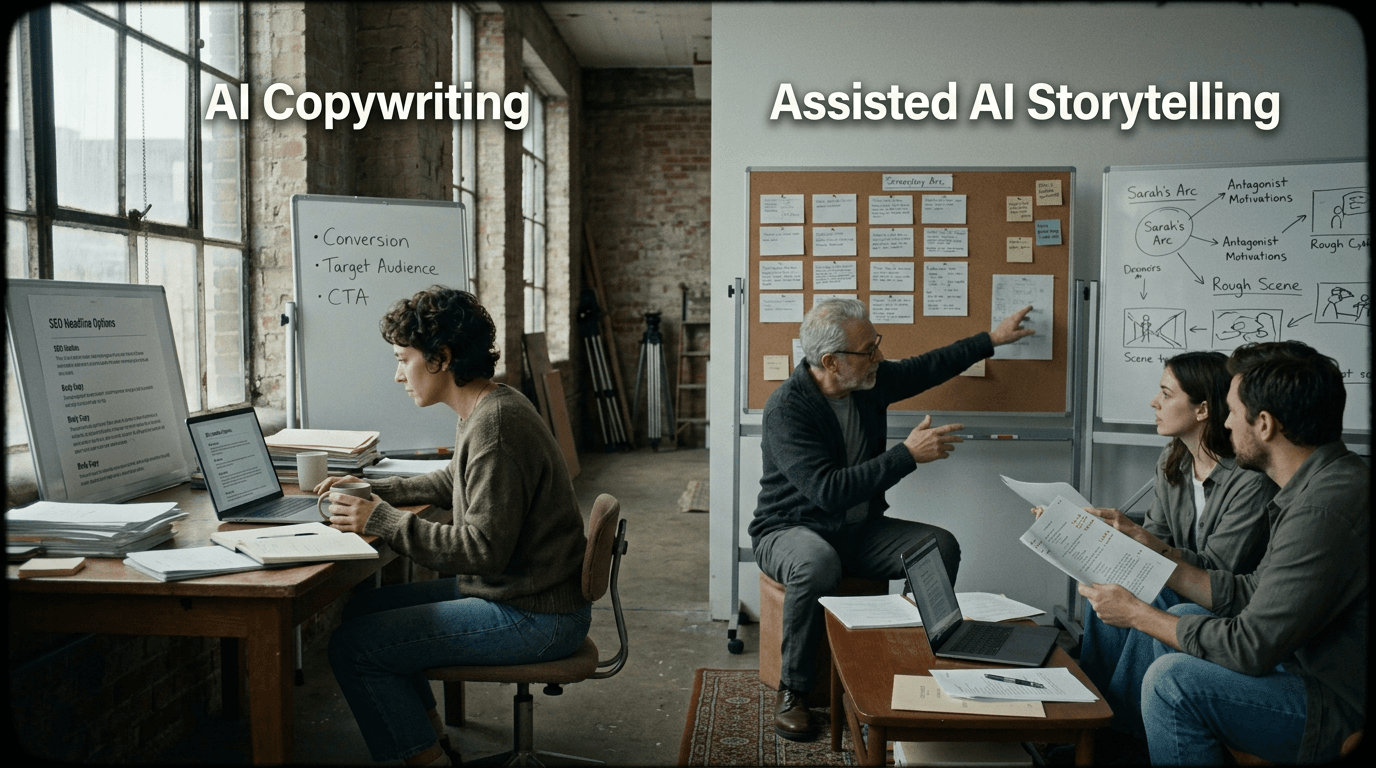

AI copywriting generates short-form, goal-oriented text: marketing emails, ad copy, product descriptions, social media captions. The text has a job: click, buy, share. Success is measurable. Tone varies by brand and is replaceable. The human's role is often to approve, edit, or discard.

AI storytelling assistance supports long-form, emotionally complex narratives: screenplays, novels, series bibles, pilot structures. The text has a purpose (to move, to resonate, to mean something), but that purpose isn't reducible to metrics. Success is subjective. Tone is signature. The human's role is authorship; the tool's role is support.

These are not the same thing. Treating them as the same thing leads to two mistakes: screenwriters dismissing all AI tools because they've seen bad marketing copy, and marketers expecting storytelling tools to produce finished scripts with the ease of generating taglines. Neither expectation is correct.

Let's be precise about the difference.


What AI Copywriting Actually Does

Copywriting is functional text. It exists to accomplish a goal: get someone to open an email, click a link, fill out a form, remember a product. The measure of good copy is conversion, not beauty.

AI copywriting tools (there are dozens now) generate text that fits these parameters. You input constraints (product name, audience, desired action, tone) and the tool produces options. Ten subject lines. Five ad headlines. Three calls to action. You pick the best, test it, iterate.

This works well because copy is constrained and measurable. The tool doesn't need to understand your brand's soul; it needs to produce phrases that satisfy the input parameters and perform against a metric. If the subject line gets more opens, it's good. If it doesn't, try another.

The human's role in AI copywriting is curatorial. You choose from options. You reject what doesn't fit. You refine inputs until the outputs improve. The writing itself can be, and increasingly is, fully machine-generated. Human judgment matters; human writing often doesn't.

AI copywriting is a slot machine that sometimes hits. You pull the lever, evaluate the result, and pull again.

This is efficient. For businesses generating hundreds of ads, emails, and posts per week, automation isn't just convenient; it's the only way to maintain volume. The human bottleneck isn't creativity; it's velocity. AI solves the velocity problem.

What AI Storytelling Assistance Actually Does

Storytelling is a different beast. A screenplay isn't a funnel; it's a world. It doesn't have a "conversion rate." It has coherence, resonance, meaning.

AI storytelling assistance isn't about generating finished text. It's about helping writers navigate the complexity of long-form narrative. The tool might:

- Brainstorm variations: "Give me ten different ways this scene could end."

- Analyze structure: "Where does my midpoint fall? Does the pacing match expectations?"

- Stress-test logic: "Is there a plot hole if the protagonist knows X by Act Two?"

- Generate placeholders: "Write a version of this dialogue so I have something to react to and revise."

- Surface patterns: "My protagonist hasn't spoken in fifteen pages. Is that intentional?"

Notice that none of these generate a finished screenplay. They generate inputs for the writer's judgment. The tool is a sparring partner, not an author.

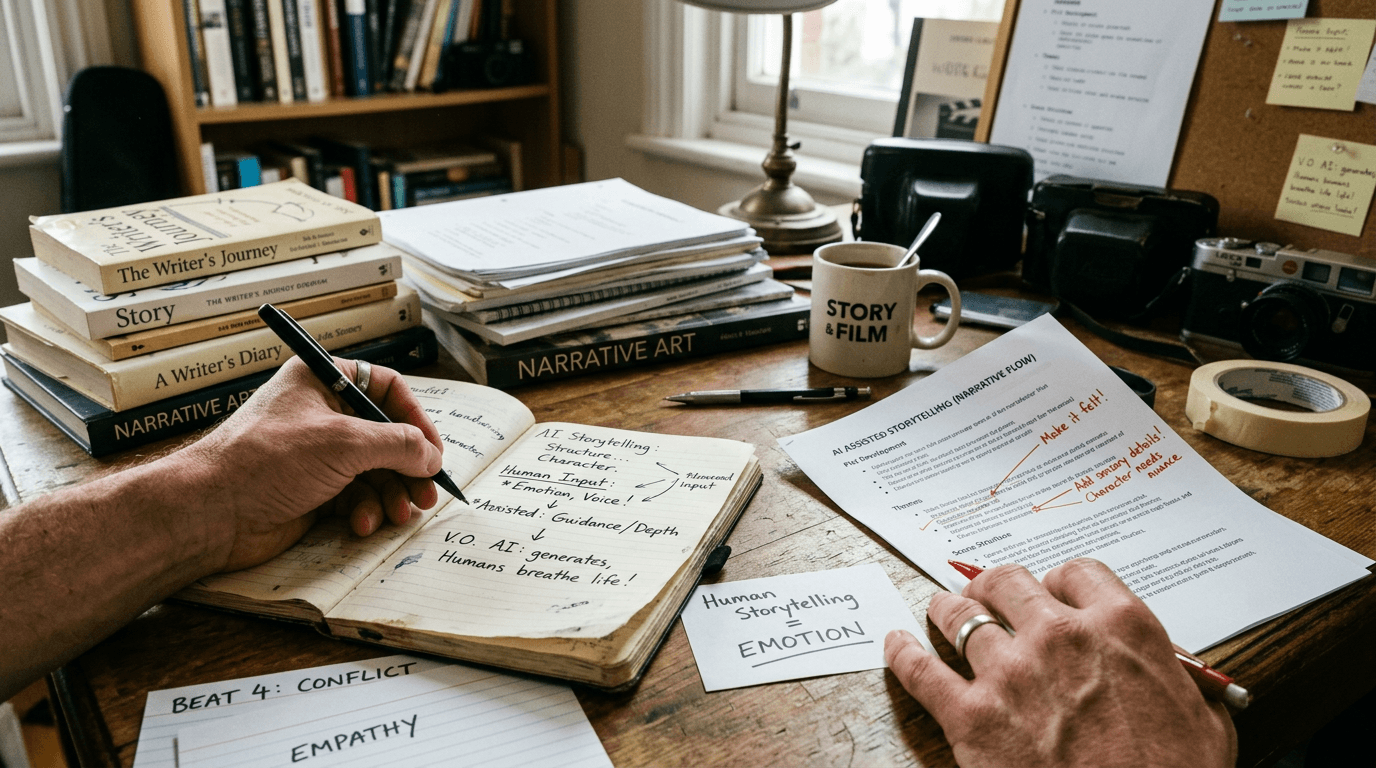

The human's role in AI storytelling assistance is creative. You make decisions. You accept, reject, or transform suggestions. The tool accelerates thinking; it doesn't replace it. The output still bears your signature, because you shaped it.

This distinction matters because storytelling has no objective metric. A screenplay that "converts" (sells, wins awards, finds an audience) is not straightforwardly better than one that doesn't. Taste is subjective. Voice is non-fungible. The value of a story isn't its efficiency; it's its effect on a human being.

A Table of Differences

| Dimension | AI Copywriting | AI Storytelling Assistance |
|---|---|---|
| Text length | Short-form (lines, paragraphs) | Long-form (scripts, outlines, bibles) |
| Success metric | Measurable (clicks, opens, conversions) | Subjective (emotional impact, critical reception) |
| Human role | Curatorial: select from outputs | Creative: shape inputs and outputs |
| Tool output | Often final (or near-final) text | Inputs for revision and decision |
| Authorship | Tool-generated, human-approved | Human-authored, tool-supported |
| Tone | Variable by brand, replaceable | Signature, non-fungible |
| Stakes | Low per item (volume compensates) | High per work (each piece matters) |
| Replacement concern | Realistic; many jobs will be automated | Unlikely; tools assist rather than replace |

These aren't value judgments. Copywriting isn't lesser than storytelling; it's different. A great tagline is as hard to write as a great logline, maybe harder, given the constraints. But the tools that support these tasks work differently, and confusing them leads to misapplied expectations.

Why Screenwriters Distrust "AI Writing"

Much of the skepticism screenwriters express about AI comes from seeing AI copywriting in action and assuming storytelling works the same way.

They see GPT generate a marketing email in four seconds. They see tools produce listicles, product descriptions, and social posts. The output is competent, generic, and soulless. They extrapolate: if this is AI writing, then AI writing has nothing to do with real storytelling.

And they're right about copywriting. They're wrong to extend that conclusion to storytelling assistance.

The tools used for narrative work don't operate the same way. They're not trying to produce a finished screenplay in four seconds. They're trying to help a writer generate options, diagnose problems, and move faster through the hard parts of story development.

The confusion is understandable because the underlying technology (large language models) is similar. But the application is different. A hammer and a scalpel are both made of metal; that doesn't make them interchangeable.

The tool that writes your Instagram caption has nothing to do with the tool that helps you restructure Act Two.

Screenwriters who dismiss all AI tools because they've seen bad copy are missing an opportunity. The assistance available now (structural analysis, brainstorming, dialogue iteration) can accelerate work without compromising voice. But only if the writer understands the tool's purpose.

Why Marketers Overestimate Storytelling Automation

The opposite mistake is equally common. Marketers who've used AI copywriting successfully sometimes assume that storytelling can be automated the same way. They see the technology improving, extrapolate forward, and conclude that "AI screenwriters" are around the corner.

This underestimates what storytelling requires.

A marketing email succeeds when it fits the slot: right length, right tone, right action. A screenplay succeeds when it creates an experience: emotional resonance, surprising inevitability, characters who feel real. These are not the same challenge.

AI can generate text that fits parameters. It struggles to generate text that means something to a human being. Meaning emerges from coherence, subtext, voice, and a thousand small decisions that accumulate over a hundred pages. The model can produce text that looks like a screenplay. Producing text that works like a screenplay is a different problem.

The analogy would be assuming that because a machine can compose a jingle, it can compose a symphony. The jingle is constrained; the symphony is vast, and the constraints that make the jingle achievable don't apply to the symphony.

A Workflow Comparison: How Each Tool Integrates

Let's look at how these tools fit into actual work.

Copywriting Workflow:

- Define goal: "Increase open rate on weekly newsletter."

- Input constraints: "Audience: lapsed subscribers. Tone: casual, urgent. Product: new feature release."

- Generate options: Tool produces fifteen subject lines.

- Select and test: Human chooses three, runs A/B test.

- Iterate: Winner is selected; tool parameters refined for next campaign.

The human's involvement is minimal and strategic. The tool does the writing. The human does the evaluation.

Storytelling Assistance Workflow:

- Identify problem: "My second act drags. I don't know where the tension goes."

- Input context: Feed the tool your outline or first act.

- Request brainstorm: "Give me ten ways to escalate conflict mid-script."

- Evaluate options: Human reads suggestions, rejects eight, finds two interesting.

- Develop: Human writes the actual scenes, using the suggestions as direction.

- Iterate: Tool analyzes the new pages, suggests refinements; human decides.

The human's involvement is continuous and creative. The tool supports. The human writes.


Three Realistic Scenarios: Correct and Incorrect Tool Use

Scenario A: Copywriter Uses AI Correctly

A freelance copywriter needs to write 200 product descriptions for an e-commerce client. Each description is 50–100 words. The products are variations on the same theme (kitchen utensils). The copywriter uses a tool to generate drafts, reviews for accuracy and brand fit, and delivers.

Why this works: Short text, clear parameters, high volume, measurable quality (product sells or doesn't). The tool is doing what it's built for.

Scenario B: Screenwriter Uses AI Incorrectly

A screenwriter pastes their logline into a language model and asks for a full screenplay. The model produces 110 pages of dialogue and action. The writer reads it, finds it generic and inconsistent, and concludes that AI "doesn't work."

Why this fails: Asking a tool to generate a finished screenplay bypasses the human judgment that makes screenplays work. The tool isn't designed to produce a feature-length, emotionally coherent narrative; it's designed to produce statistically likely text. The writer used the tool for the wrong purpose.

Scenario C: Screenwriter Uses AI Correctly

A screenwriter is stuck on a B-plot. They input their outline and ask: "What are five ways the sister's subplot could intersect with the main plot by the midpoint?" The tool generates ideas, some bad, one intriguing. The writer develops the intriguing one into a scene.

Why this works: The writer used the tool for brainstorming, not writing. The output wasn't taken literally; it was transformed into something original. The tool accelerated thinking without replacing it.

The "Trench Warfare" Section: Where Confusion Creates Problems

Failure Mode #1: Treating Story Suggestions as Final

A writer asks for dialogue suggestions, receives competent but generic lines, and pastes them into the script without revision. The draft reads flat. The voice is inconsistent.

How to Fix It: Suggestions are raw material. Always revise. A line from the tool should be no more "finished" than a first-draft line from yourself. The edit is where the voice emerges.

Failure Mode #2: Dismissing Brainstorming Because Copy Is Bad

A writer tries a general-purpose AI tool for marketing copy, finds the results soulless, and concludes that AI has nothing to offer screenwriters.

How to Fix It: Use tools designed for storytelling assistance, not general copywriting. Evaluate the tool on narrative tasks (brainstorming, structure analysis, dialogue iteration), not on marketing output.

Failure Mode #3: Expecting Metrics Where None Exist

A producer evaluates an AI-assisted script by asking "What's the success rate?" There is no success rate. Story quality isn't a percentage.

How to Fix It: Accept that storytelling metrics are subjective. Ask readers, do table reads, test with audiences. But don't expect a number that tells you the story "works."

Failure Mode #4: Automating Voice

A showrunner uses a tool to generate dialogue for all characters, producing efficient but homogeneous text. Every character sounds the same.

How to Fix It: Voice is non-automatable. Use tools for structure and brainstorming; write the dialogue yourself, or use the tool only to generate options you'll transform.

Where the Boundary Blurs

There are edge cases, and it's worth acknowledging them.

Some storytelling is commercial and parameter-driven. A holiday movie for a streaming service might follow a formula so tightly that AI could draft large portions of it. The "creativity" isn't in the plot; it's in execution, charm, and performance.

Some copywriting is creative and non-fungible. A Super Bowl ad needs genuine wit, surprise, and emotional resonance. The best taglines ("Just Do It," "Think Different") are closer to poetry than to product descriptions.

The categories aren't absolute. But they're useful. Most screenwriting requires human judgment at the core. Most copywriting can tolerate automation at the core. Knowing which you're doing, and which tool is appropriate, saves time and prevents disappointment.

The Perspective: Know What You're Building

The takeaway isn't that AI copywriting is bad and AI storytelling is good. It's that they're different.

If you're building a marketing machine, AI copywriting is a legitimate accelerator. Use it.

If you're building a story that matters, AI storytelling assistance is a legitimate support tool. Use it differently.

The confusion arises when these categories collapse: when marketers assume stories can be automated like ads, or when writers assume assistance means replacement. Neither is true.

The future isn't AI writing everything. The future is humans using AI where it fits, and knowing where it doesn't.

For screenwriters, that means learning to distinguish brainstorming support from final-text generation. It means using tools to think faster, not to stop thinking. It means treating suggestions as kindling, not as fire.

The story is still yours. The tool is just in the room.

[YOUTUBE VIDEO: A comparison of AI copywriting workflows versus AI storytelling assistance workflows, with examples of each application and discussion of appropriate use cases.]

Further reading:

- For practical workflows using language models as brainstorming partners to stress-test plot holes, see our guide.

- If you're using AI to generate logline variations quickly, we cover the method and pitfalls.

- Copywriting best practices are documented extensively at copyblogger.com.

Final Step

Build your next script with Screenweaver

Move from ideas to production-ready pages faster with timeline-native writing and AI-assisted story flow.

Try Screenweaver