You're writing a period piece. The dialogue has to sound right. The props, the dress, the way people address each other, all of it carries the weight of "we did the work." So you dig. Archives, primary sources, academic papers. Now there's another option: ask an LLM. "How would a servant address a lord in 1820s England?" "What did a Parisian café look like in 1927?" The answers come back in seconds. Fast doesn't mean right. And right isn't the only thing that matters. The ethics of AI-assisted historical research for period dramas sit at the intersection of accuracy, attribution, and the audience's trust in what they're seeing.

The line isn't "never use AI for research." It's "never present AI output as verified fact without checking it, and know when the check itself has to be human."

Here's the tension. AI can summarize, suggest, and surface. It can also hallucinate dates, invent sources, and blend fact with convention. For a novelist, a wrong detail might slip through. For a period drama on screen, the wrong hat or the wrong turn of phrase can break the spell. Viewers and critics will fact-check. So the ethical use of AI in historical research isn't about banning it. It's about verification chains. Whatever the model gives you, a phrase, a custom, a date, you treat it as a hypothesis. You find a primary or secondary source that confirms or corrects it. If you can't find one, you don't use it. The AI is a starting point. The source is the authority.

Cinematic workflow frames

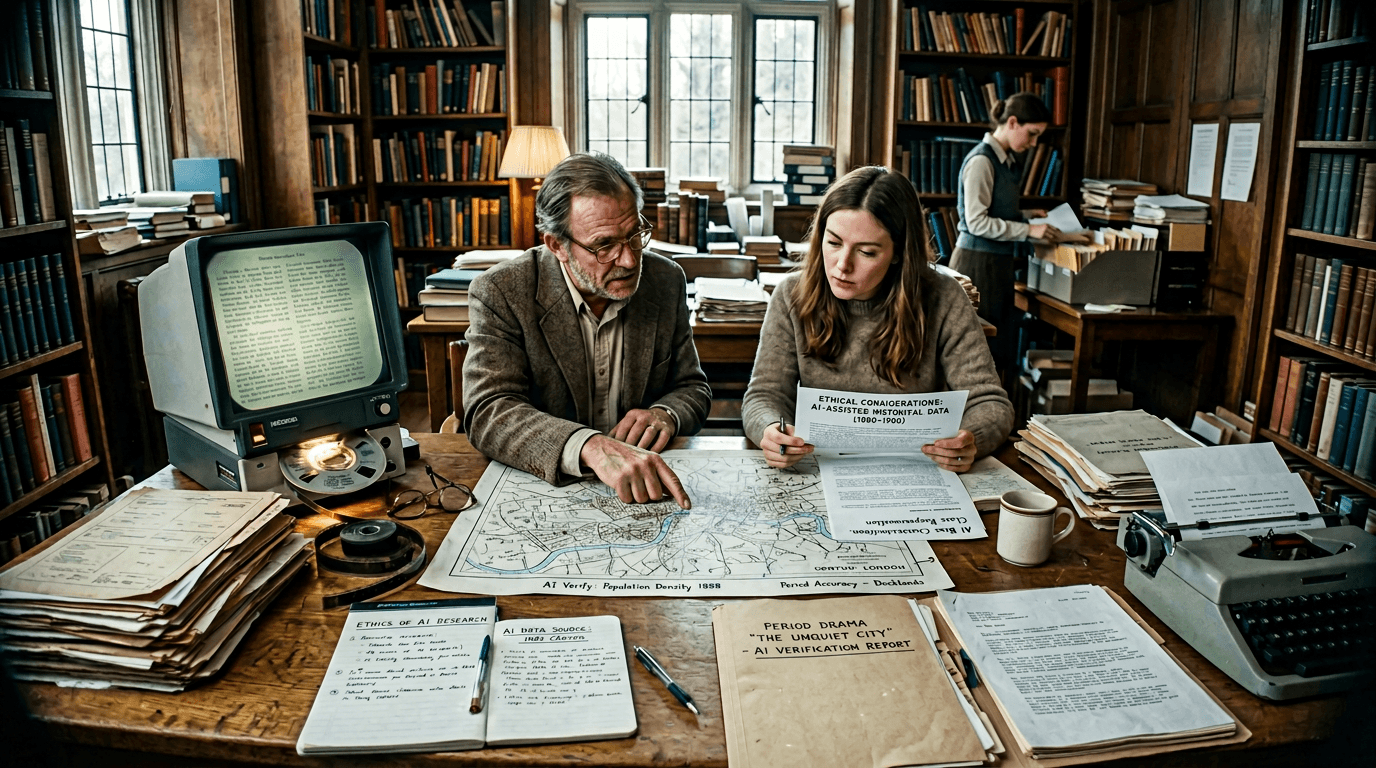

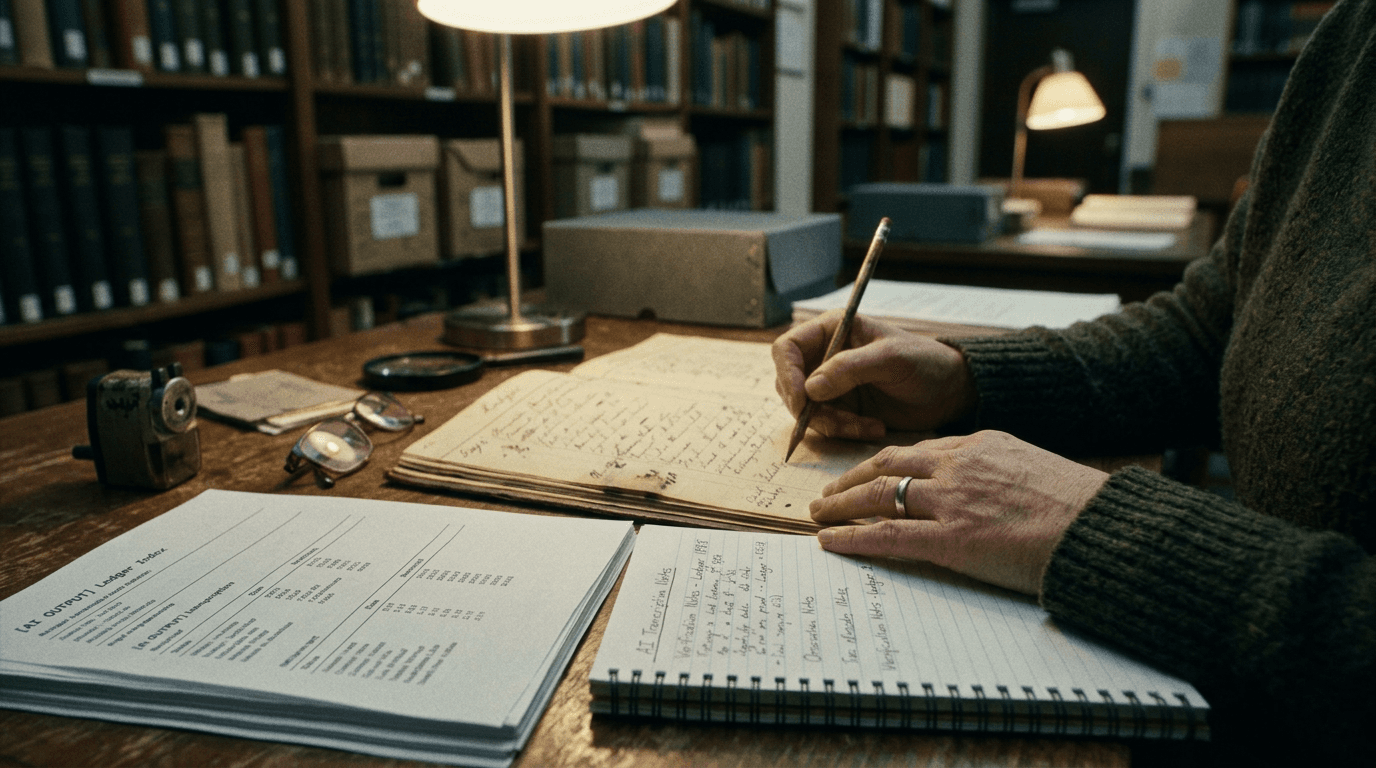


Why Period Drama Is Different

In contemporary fiction, a writer can lean on lived experience and common knowledge. In period drama, the writer is always at one remove. The audience may not know 1820s etiquette, but someone will. Historians, enthusiasts, and critics will notice anachronisms. The production may employ a historical consultant. So the standard isn't "sounds plausible." It's "we can back this up." AI is trained on a mix of accurate and inaccurate material. It cannot reliably trace a claim back to where it came from. It can't tell you "this comes from a peer-reviewed article" vs "this comes from a forum post." So the moment you use it for historical detail, you take on the responsibility to verify. That's the ethical core: you don't outsource the claim to the machine. You use the machine to generate candidates; you use sources to confirm.

There's a second layer: attribution and transparency. If a show's research is partly AI-assisted, does the audience or the network need to know? In most cases, the audience cares about the result (does it feel true?), not the method. The network and the guild may care about method for contractual and credit reasons. So the ethical practice is: use AI as a research aid, verify everything you use, and follow any applicable disclosure or credit rules for your jurisdiction and your deal. Don't claim you "did all the research yourself" if you relied on unverified AI output. Don't present AI summaries as if they were primary sources.

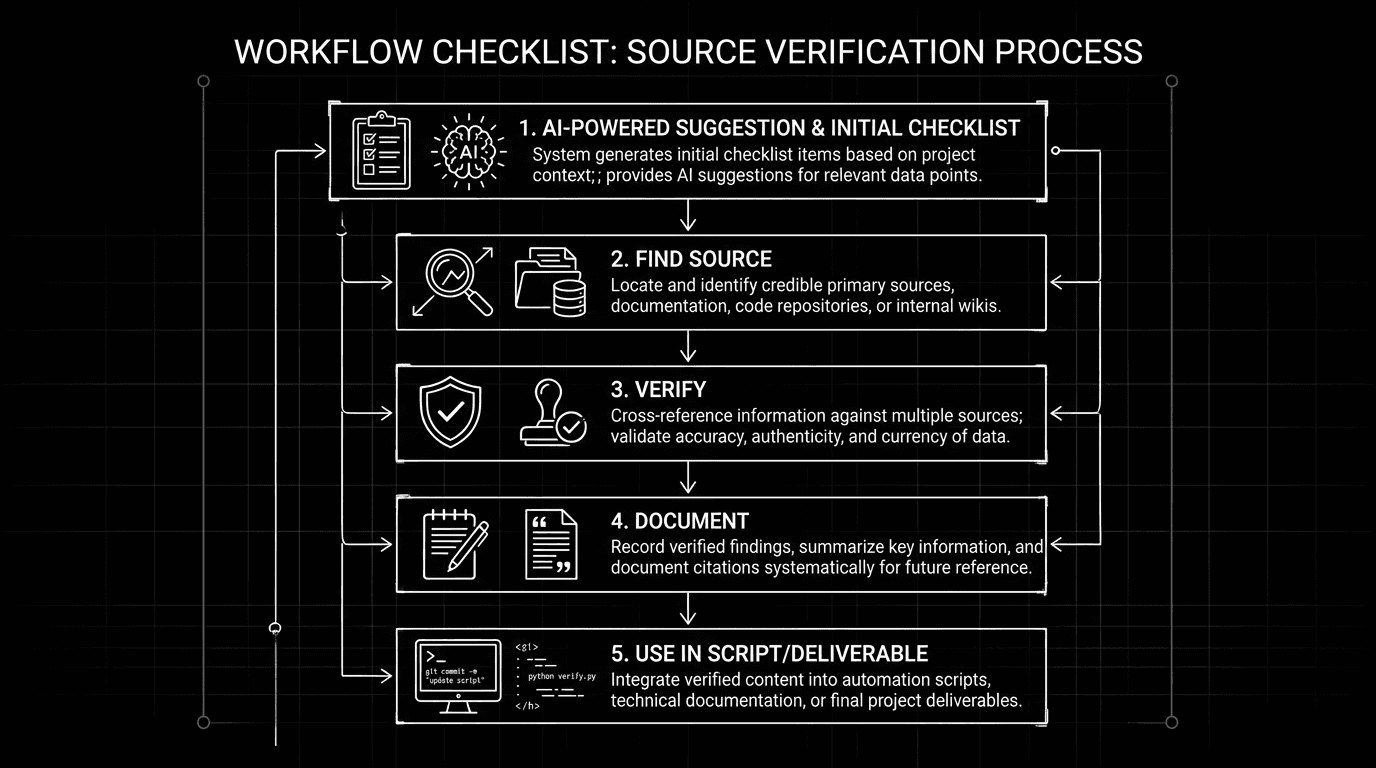

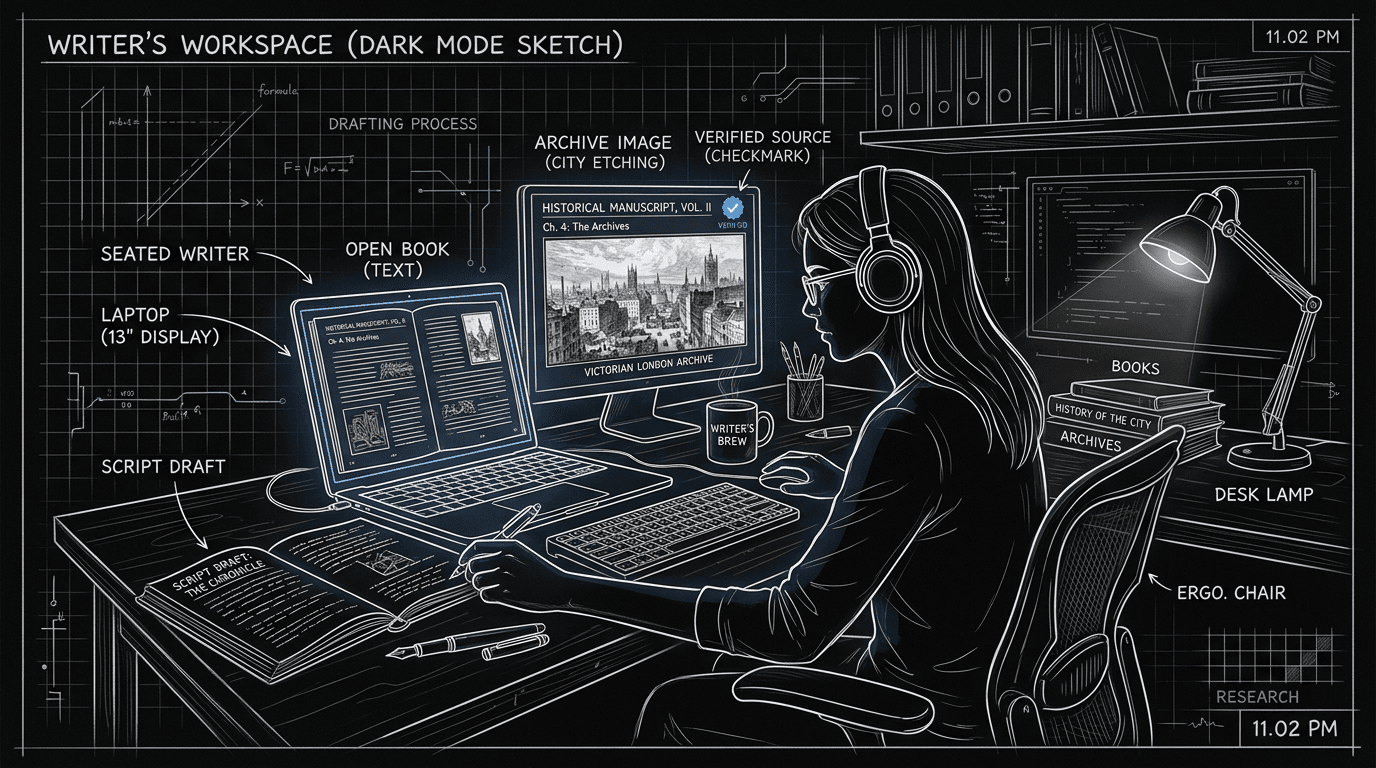

The Workflow: AI as Hypothesis Generator, Not Source of Truth

Step 1: Use AI to generate candidates, not answers. Phrase prompts as "what might have been true?" not "what was true?" Ask for "possible ways a character in X place and time would have said Y" or "common period-appropriate details for Z setting." You're asking for options to investigate, not facts to paste.

Step 2: For every detail you plan to use, find a human source. Book, article, archive, expert. If the AI says "servants often used 'sir' in 1820s England," you find a primary source (letter, diary, conduct book) or a cited secondary source that supports it. If you can't, you drop the detail or replace it with one you can verify. This takes time. It's the non-negotiable step. The AI saved you time generating possibilities. It doesn't save you the verification.

Step 3: Document your sources for key claims. For dialogue, dress, and major historical beats, keep a simple list: Detail / Source / Page or link. That protects you if someone challenges the show and helps the next writer or consultant on the project. It also makes clear that the chain of authority is human, not algorithmic.

Step 4: Know when to bring in an expert. Some questions are too high-stakes for "AI plus Google." Legal procedures, medical practices, or culturally sensitive portrayals may require a paid consultant or an academic advisor. Use AI to prepare questions for the expert, not to replace them.

Step 5: Don't let AI narrow your research. The model tends to return the most common or stereotypical answer. Period drama often benefits from the odd, specific detail that only deep reading turns up. So use AI to get into the ballpark, then read beyond it. The best details often come from sources the model never cited.
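Steps 2 and 3 can be sketched as a small research log. This is a minimal sketch in Python, not a prescribed tool: the names `Candidate`, `split_by_verification`, and `research_log` are hypothetical, and the sample source and page number are placeholders, not real citations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    """One AI-suggested detail, treated as a hypothesis until sourced."""
    detail: str
    source: Optional[str] = None    # book, article, archive, or expert
    locator: Optional[str] = None   # page number or link

    @property
    def verified(self) -> bool:
        # Verified only when a human source AND a page or link are recorded.
        return bool(self.source and self.locator)


def split_by_verification(candidates):
    """Return (usable, dropped); usable entries have a source on record."""
    usable = [c for c in candidates if c.verified]
    dropped = [c for c in candidates if not c.verified]
    return usable, dropped


def research_log(usable):
    """Render the 'Detail / Source / Page or link' list as plain text."""
    return [f"{c.detail} / {c.source} / {c.locator}" for c in usable]


# Placeholder entries for illustration only; the page number is invented.
candidates = [
    Candidate("Servant declines with a deferential formula",
              source="Conduct manual, 1874 (placeholder entry)",
              locator="p. 41"),
    Candidate("Servants often used 'sir'"),  # no source found yet
]

usable, dropped = split_by_verification(candidates)
for line in research_log(usable):
    print(line)
print("Dropped until sourced:", [c.detail for c in dropped])
```

The design choice worth noting is that the AI never appears as a field: a candidate either gains a human source and a locator, or it stays out of the script.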

| Use AI for | Don't use AI for | Always do |
|---|---|---|
| Suggesting period-appropriate phrases, objects, customs | Final fact without verification | Verify with a human source before using |
| Generating questions to ask an expert | Replacing an expert on sensitive topics | Document source for key claims |
| Speeding up "what might I look up?" | Citing as a source in notes or on screen | Treat output as hypothesis, not truth |

For organizing research alongside your script so nothing gets lost, see "historical research and script in one place." For the broader line between human and machine in writing, "ethics of AI in screenwriting" lays out guild and professional context.

Relatable Scenario: The Line That "Sounds Period"

You need a servant to refuse a request in a way that's polite but firm, 1880s British. You ask the LLM for three options. It gives you phrases that sound plausible. You pick one. Before you lock it, you search for "servant refusal polite 19th century" and find a conduct manual from 1874 that describes exactly that dynamic, with different wording. You adapt your line to be closer to the manual. The AI got you to the right kind of moment. The manual got you to a defensible line. You cite the manual in your research doc. If someone asks, you have a source.

Relatable Scenario: The Prop That Doesn't Exist Yet

Your script calls for a specific object in a 1920s kitchen. The AI suggests "a mechanical egg beater" and describes one, but parts of the description sound like a powered appliance. You're about to put it in the action line when you think to check. You discover the handheld rotary egg beater was already common by then, but the electric version didn't spread until later. You adjust the description to match what would have been there, or you change the beat to a different action. The AI gave you an idea. Verification kept you from an anachronism that a sharp viewer would catch.

Relatable Scenario: The Consultant Who Asks "Where Did You Get This?"

The historical consultant on the production asks about a custom you've written into a scene. You say you generated several possibilities and then checked a source. You name the source. The consultant agrees or suggests a tweak. You're in good shape. If you'd said "the AI said so," you wouldn't be. The ethics aren't about hiding that you used AI. They're about never stopping at "the AI said so." The chain ends at a human, verifiable source.

What Beginners Get Wrong: The Trench Warfare Section

Using AI output as fact without checking. The model can be wrong. It can mix eras, invent sources, or reflect bias in its training data. The fix: every detail that goes into the script must be verified against a human source before use. No exceptions for "it sounded right."

Citing the AI or "AI research" as a source. Producers, consultants, and critics expect sources they can look up. The fix: your source list names books, articles, archives, or experts. The AI is a tool that helped you generate hypotheses; it doesn't appear as the authority.

Asking the model "is this accurate?" The model can't verify itself. The fix: don't ask it to. Ask it for possibilities, then verify those possibilities elsewhere. Accuracy is established by human sources.

Skipping verification for "small" details. A single wrong word or object can pull a viewer out. The fix: if it's on the page, it's part of the world. Verify what you use, or cut it.

Letting AI narrow the research to the obvious. The model often returns the most generic period detail. The fix: use AI to get started, then read primary and secondary sources yourself. The best period color often comes from material the model never surfaced.

Ignoring guild or contract rules. Some agreements have clauses about AI use or disclosure. The fix: know your deal and your guild's position. Ethics of AI in screenwriting and copyright and AI-assisted work are starting points; when in doubt, get legal or guild guidance.
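Several of the mistakes above can be caught with a quick audit of the research log before pages lock. The following is a rough sketch in Python under stated assumptions: the row layout (detail, source, page or link), the function names, and the `AI_MARKERS` pattern are all hypothetical, and a pattern match is only a prompt for a human to look, not a verdict.

```python
import re

# Hypothetical markers suggesting a note cites the model rather than a
# human source. Extend the pattern for whatever tools you actually use.
AI_MARKERS = re.compile(r"\b(ai|llm|chatbot|language model)\b", re.IGNORECASE)


def audit_entry(source, locator):
    """Return a list of problems for one research-log row."""
    problems = []
    if not source or not source.strip():
        problems.append("no source recorded")
    elif AI_MARKERS.search(source):
        problems.append("source names the AI, not a human source")
    if not locator or not locator.strip():
        problems.append("no page or link")
    return problems


def audit_log(rows):
    """Map each flagged detail to its problems; clean rows are omitted."""
    report = {}
    for detail, source, locator in rows:
        problems = audit_entry(source, locator)
        if problems:
            report[detail] = problems
    return report


# Placeholder rows for illustration only; the page number is invented.
rows = [
    ("Polite refusal wording", "Conduct manual, 1874", "p. 41"),
    ("Kitchen egg beater", "AI research", ""),
    ("Form of address for a lord", "", ""),
]

report = audit_log(rows)
for detail, problems in report.items():
    print(f"{detail}: {'; '.join(problems)}")
```

An audit like this only checks that a source is named. Whether the source actually supports the detail is still a reading job for a person.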

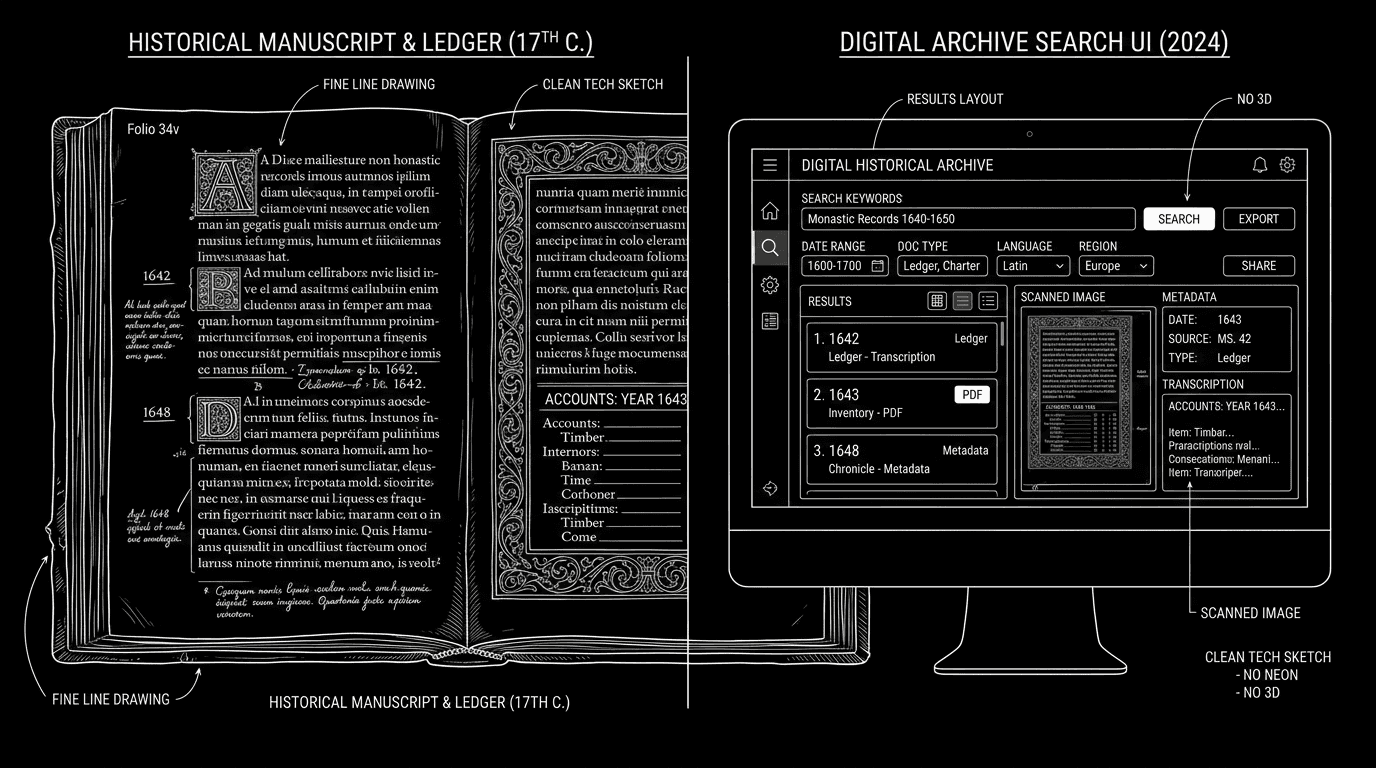

[YOUTUBE VIDEO: Short demo: using an LLM to generate three period-appropriate phrase options, then showing how to verify one of them via a digitized primary source and add it to a research log.]

Software and parameters. Use any general-purpose LLM for hypothesis generation. Phrase prompts in a way that invites options: "Give three possible ways…" or "What might have been typical for…" rather than "What was the fact?" Temperature 0.5–0.7 can help with variety. For verification, use digitized archives, academic databases, and expert consultation, not the model. For more on organizing the resulting research, organizing historical research and script keeps sources and pages in one place.
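The prompt phrasing and temperature advice above can be sketched as a small helper. This is an illustrative sketch in Python only: `candidate_prompt` and `request_settings` are hypothetical names, and the returned dictionary is a generic placeholder, not any vendor's API.

```python
def candidate_prompt(subject, place, period, action, n_options=3):
    """Build a prompt that asks for options to investigate, not facts."""
    return (
        f"Give {n_options} possible ways {subject} in {place} in the "
        f"{period} might have {action}. "
        "Present them as possibilities to research, not established facts."
    )


def request_settings(prompt, temperature=0.6):
    """Bundle the prompt with a sampling temperature.

    The default sits inside the 0.5-0.7 range suggested in the text;
    how the settings are sent to a model is left to the tool you use.
    """
    return {"prompt": prompt, "temperature": temperature}


prompt = candidate_prompt(
    subject="a servant",
    place="Britain",
    period="1880s",
    action="politely refused a request",
)
settings = request_settings(prompt)
print(settings["prompt"])
```

Whatever comes back from a prompt like this is still a list of hypotheses. Verification happens in archives, databases, and conversations with experts, not in a follow-up prompt.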

One External Reference

Academic and archival standards for historical accuracy are set by institutions and peer review, not by tech companies. The WGA’s position on AI addresses authorship and disclosure; for period work, your verification chain should meet the standard that a historian or consultant would expect.

The Perspective

AI can speed up the "what might I look up?" phase of historical research. It cannot replace the "did we get it right?" phase. The ethics of AI-assisted research for period drama come down to one rule: nothing goes in the script as fact until a human source has confirmed it. Use the machine to generate candidates. Use people and documents to close the loop. The audience's trust in the period rests on that chain.
